Processing Equipment: Simulation Finds A New Model

Powerful data analytics such as artificial intelligence (AI) and machine learning (ML) are profoundly affecting the development of process simulation technologies. However, vendors including KBC Advanced Technologies, Walton-on-Thames, U.K., and Aspen Technology, Bedford, Mass., are finding that lots of users don’t fully appreciate how simulation is evolving.

A recent KBC user webinar looking at the evolution of process simulation highlighted the disparity. For example, when asked if simulation technology has kept up with advances in software and information technology (IT), nearly 56% of attendees said it hadn’t.

“I voted no, too,” admits the webinar’s host, Andy Howell, KBC’s executive vice president, technology.

“I don’t think any of the simulation vendors have done enough in the last ten years. It’s definitely time to take more notice of third-party solutions such as blockchain, automation and cloud-based technologies, etc. I think that with the advances in data science such as AI and ML, rather than just computer science, we are on the threshold of a second wave of innovation in process simulation. KBC has been at the forefront of the first wave in thermodynamic computation and intends to be front and center in the second,” he notes.

However, Howell is keen to point out that process simulation isn’t obsolete — not least because data analytics technologies still have severe limitations with the nonlinear systems that make up many chemical processes.

“The future will be a sensible combination of these things, a hybridization aimed at real world problems [Figure 1],” he adds.

Another poll during the webinar found that half of attendees feel the biggest challenge for process simulation today is integration into other systems. Finding and accessing the data needed for a model came second, with 35% of the vote.

“The integration result was a little bit of a surprise as vendors have made great strides in this area. However, it’s a wake-up call to any simulation vendors who think they can do everything. They can’t and should never think that they can. Using third-party technologies in cloud-based systems allows users to mix and match simulators and the many add-on analytic tools available today in the way they want,” emphasizes Howell.

Third-Party Benefits

KBC itself has long associations with many third-party technology providers, the most recent a partnership announced in February giving Petro-SIM users access to the rigorous gas/liquid separation modeling supplied by MySep, Midview City, Singapore.

It’s a relationship designed to improve separation expertise at oil companies and engineering/procurement/construction (EPC) contractors, both of which suffer from many disparate and unvalidated design engineering practices for vessels and separation internals.

“Poor separator design causes excessive carryover of liquid from separation vessels resulting in rapid or progressive damage to downstream compressors, contactors, and other pre-treatment facilities. This leads to extended and/or unplanned shutdowns causing operational losses,” notes Russell Byfield, KBC global simulation business leader.

KBC also is seeking tie-ups covering a raft of other modeling technologies, including electrolytes, environmental emissions, energy management, bioreactor diesel and synthetic ethanol — so-called e-fuels.

Almost 40% of webinar attendees cited self-tuning as the most important issue for process simulation in the future — well ahead of self-building and closed loop automation. This doesn’t surprise Howell at all.

It’s one of the reasons KBC parent company Yokogawa, Tokyo, recently signed a partnering deal with enterprise AI developer C3AI, Redwood City, Calif.

“It puts their AI Suite capability into Petro-SIM, giving it the ability to self-tune using whatever quality of data is present in a facility. Ultimately, self-build models will follow on from this,” explains Howell.

The company is pursuing two case studies using this technology, one being the tuning of reactor catalysts in refining.

“It’s almost folklore how difficult it is to take reactor data, tune it to the reactor model and then be able to predict the operation of the catalyst under current and future conditions with different oils and loads. It’s the first time we’ve really auto-tuned the model... in a reactor/catalyst environment — which is probably one of the most complex situations we get in processing,” he notes.
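As a rough illustration of what tuning a reactor model to plant data involves, the sketch below fits a single rate constant of an idealized first-order plug-flow reactor to measured conversions. The reactor model, the function name `tune_rate_constant` and the data are all hypothetical. They are not taken from Petro-SIM or C3 AI, whose tuning methods are not described here, and a real catalyst model has many more parameters.

```python
import math

def predicted_conversion(k, tau):
    """Idealized first-order plug-flow reactor: X = 1 - exp(-k * tau)."""
    return 1.0 - math.exp(-k * tau)

def tune_rate_constant(taus, conversions):
    """Least-squares fit of k using the linearized form -ln(1 - X) = k * tau.

    This is a regression through the origin, so k = sum(tau*y) / sum(tau^2).
    """
    ys = [-math.log(1.0 - x) for x in conversions]
    num = sum(t * y for t, y in zip(taus, ys))
    den = sum(t * t for t in taus)
    return num / den

# Hypothetical plant data: residence times (h) and measured conversions,
# generated here from k = 0.5 1/h so the fit can be checked.
taus = [0.5, 1.0, 2.0, 4.0]
conversions = [predicted_conversion(0.5, t) for t in taus]

k_fit = tune_rate_constant(taus, conversions)
```

With noise-free synthetic data the fit recovers the rate constant used to generate it. With real plant data the same routine would return the value that best reconciles model and measurements in the least-squares sense.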

KBC is using the technology in a pilot with several oil companies that have had problems with reactor operations. The challenge for KBC is to find the inherent issues causing these difficulties in an automated way, before they even appear.

“The pilot’s been running for about eight months now and it’s looking very good. It’s due to conclude perhaps in November or December and after that we will be looking to release a commercial product as a bolt-on to our existing simulator,” says Howell.

The second case study involves simulations of crude pre-heat trains; one aim is determining which heat exchangers need cleaning first: “So we’re doing the hardest units first, with the aim eventually of applying these simulations to a refinery-wide model and ultimately replacing — or supplementing — the LP [refinery linear program] model.”
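One common way to frame the question of which exchanger to clean first is to rank units by fouling resistance, R_f = 1/U_actual - 1/U_clean, where U is the overall heat-transfer coefficient. The sketch below does only that. The exchanger tags and U values are made up, and a real pre-heat train study would also weigh lost duty, energy cost and cleaning cost, none of which is detailed here.

```python
def fouling_resistance(u_clean, u_actual):
    """Fouling resistance in m^2*K/W from overall coefficients in W/(m^2*K)."""
    return 1.0 / u_actual - 1.0 / u_clean

def rank_for_cleaning(exchangers):
    """Return exchanger tags sorted from most to least fouled."""
    scored = {
        tag: fouling_resistance(u_clean, u_actual)
        for tag, (u_clean, u_actual) in exchangers.items()
    }
    return sorted(scored, key=scored.get, reverse=True)

# Hypothetical data: tag -> (clean U, current U), both in W/(m^2*K).
exchangers = {
    "E-101": (500.0, 400.0),
    "E-102": (450.0, 250.0),
    "E-103": (600.0, 550.0),
}

cleaning_order = rank_for_cleaning(exchangers)
```

Here `cleaning_order` lists the hypothetical E-102 first because its coefficient has dropped furthest from the clean value.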

KBC’s overall goal is to provide a platform with a petrochemical-wide application, with plug-ins for niche applications from other vendors. Its vision includes cloud-agnostic building blocks, sets of integrated event-based work and data flows, time-series data storage, and use of AI tools to aid model management and updates. It must be self-building, self-sustaining and ultimately in a 3D game style — like Minecraft, declares Howell.

“The digital twin is one step along the way to what I think will be a digital triplet: data analytics and process simulation connected into a seamless, VR [virtual reality]-type environment. It’s that hybridization that will drive the assets of the future,” Howell concludes.

Bringing AI To Process Engineers

AspenTech also is focused on technology hybridization. It recently launched the third of three tools in the Hybrid Models family. These specifically are designed for use by process and plant engineers to rapidly turn plant and simulation data into AI-based ML models without requiring any significant data science understanding.

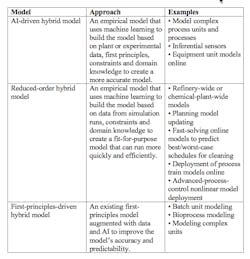

They combine first-principles physico-chemical knowledge with AI-based empirical models and intuitive, automated workflows to deploy as operational applications (Table 1).
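A minimal sketch of the general hybrid idea, assuming the simplest possible structure: a first-principles prediction plus an empirical correction fitted to the residuals between plant data and that prediction. The physics function, the straight-line correction and the numbers are illustrative assumptions, not AspenTech's implementation.

```python
def first_principles(temp_c):
    """Hypothetical physics-based yield estimate (%), deliberately imperfect."""
    return 40.0 + 0.10 * temp_c

def fit_linear(xs, ys):
    """Ordinary least-squares fit y = a + b*x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sxy / sxx
    return my - b * mx, b

# Hypothetical plant data: temperature (C) and measured yield (%).
temps = [300.0, 320.0, 340.0, 360.0]
measured = [71.5, 73.9, 76.3, 78.7]

# Empirical part: learn what the physics model misses (the residual).
residuals = [y - first_principles(t) for t, y in zip(temps, measured)]
a, b = fit_linear(temps, residuals)

def hybrid(temp_c):
    """First-principles prediction plus data-driven residual correction."""
    return first_principles(temp_c) + a + b * temp_c
```

In this toy case the hybrid prediction matches the plant data more closely than the physics function alone. A production tool could use a richer ML model than a straight line for the correction term.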

The company piloted Hybrid Models with 96 companies, including 34 chemical and specialty chemical manufacturers and four with integrated energy and petrochemicals operations.

Table 1. The target uses of the models differ. Source: AspenTech.

“The chemicals companies involved were diverse in size and products. We had 15 out of the top 20 largest chemical producers worldwide. We also had representation from global chemical companies, as well as regional players in the U.S., Latin America, Europe, Middle East, Africa, and Asia,” says Gerardo Munoz, product marketing manager, engineering. “We received very good feedback,” he adds.

“Aspen Hybrid Models are a major advance in the field of chemical engineering. Hybrid models are a major step forward in bringing together AspenTech’s process models and machine learning and are a game changer in process engineering and plant improvement,” says Karuna Potdar, vice president, technology center of excellence, Reliance Industries, Mumbai, India.

“Using the hybrid model, we were able to create a model that can reproduce real plant data more accurately than the conventional reformer model. We were able to create a highly accurate model in a short period of time,” comments Takuto Nakai, who is in the production department of Nissan Chemical, Tokyo.

A third chemical company described the models as a step in the right direction for mission-critical process units, while two others praised the ease and simplicity of the workflows and their excellent diagnostics and analysis capabilities.

More detailed facts and figures about the three Hybrid Models emerged during AspenTech’s Optimize 21 virtual user conference in May. Nissan Chemical estimated it decreased steam use by up to 1% in ammonia plants by accurately calibrating reactor kinetics and temperature profiles using models that were created in half the time of a conventional model. Dow reduced the iterations/time for convergence in simulation models by 36% using Hybrid Models as surrogate models to initialize larger simulations. Sabic obtained useful insights on how to improve final product quality after accurately predicting impurity concentration in a distillation process.

Other examples cited by Munoz include:

• a leading European refiner addressed an issue with heat exchanger fouling that was costing $1 million/yr;

• a refining and petrochemical company in the Middle East updated planning models in half the time and estimated gains of 11.3¢/bbl from improved planning model accuracy;

• Lummus Technology reduced time in design and optimization of a gas processing plant by 50%;

• and an engineering consulting company in North America that focuses on oil and gas is using one of the models to optimize an upstream asset, estimating a net present value increase of 8%.

“There is a lot of excitement, and we are looking forward to seeing the benefits as they are being adopted for different use cases. There has been very positive feedback on first-principles-driven Hybrid Models in chemicals, as this modeling technology can be very useful to tune kinetics of reactor models and represent real behavior — for example, real mixing — without having to do a lot of manual tuning. The most positive feedback from users is that this can be done within software with which they are already familiar such as Aspen Plus and Aspen HYSYS, making it very easy for them to use,” states Munoz.

Users have offered input on desired enhancements to the hybrids; most feedback focused on usability and how the process could be improved to facilitate the creation of simulation models.

“Some of the feedback and use cases identified by our customers were related to how to use these models to solve key issues in chemicals. The most common cases were modeling key polymer properties, waste reduction and improved product quality, and model creation for special processes such as aromatic upgrading units,” adds Munoz.

Like KBC, AspenTech also is forging links with third-party suppliers. The most recent tie-up is with Larsen & Toubro Infotech (LTI), Mumbai. LTI’s cloud-managed services will allow access to AspenTech’s performance engineering desktop technologies via the cloud.

In addition, AspenTech has bought some companies, for example, industrial analytics and asset optimization specialist Camo Analytics, Oslo, Norway. The company’s Unscrambler suite mines big data stores to improve quality and efficiency of output in the pharmaceutical and biotech industries.

Another acquisition was OptiPlant, Walnut Creek, Calif. Its technology focuses on helping EPCs to increase agility and speed of conceptual and front-end engineering design development, dramatically reducing the engineering effort involved. AI-driven 3D conceptual plant layout with parametric asset models and automatic pipe routing enables closer collaboration between owner-operators and EPCs to improve accuracy, reliability and speed of cost estimates from the earliest project stages.

“OptiPlant and Camo can be complementary to Hybrid Models because their focus is different. For example, Aspen Unscrambler from Camo and Aspen ProMV can be used in combination with Aspen Hybrid models. They can be used to analyze the data and identify which are the most relevant variables in a process — then select the data used to create the hybrid model and make the process easier and the model more accurate,” concludes Munoz.

Seán Ottewell is Chemical Processing's editor at large.