Predictive Analytics Capture Heartbeat of the Plant

Air Products is a world-leading industrial gases company that provides atmospheric and process gases and related equipment to a variety of manufacturing markets. With over 750 production facilities, 1,800 miles of industrial gas pipeline and operations in more than 50 countries, the company clearly understands that asset safety, reliability and efficiency are key to value generation. To this end, Air Products has increasingly focused on extracting further insight about asset performance from process data.

The fundamental operational questions that data-driven decision-making potentially can address lie at the intersection between asset optimization and reliability. Often, optimizing asset efficiency can detrimentally impact reliability and vice versa. The only way to determine the optimal tradeoff objectively is through rigorous analysis of data. Some representative questions that plant managers often pose, and that data-driven decision-making ideally should answer, include:

• Should I run this fixed-bed reactor at higher temperatures to achieve a greater conversion? If so, how will this affect my catalyst activity as well as the overall mechanical integrity of the reactor?

• When should I service the intercoolers of my multistage compressor given the tradeoff between maintenance cost and the potentially degrading isothermal efficiency of the compressor?

• Can I extend the time between plant outages without increasing my unplanned maintenance cost?

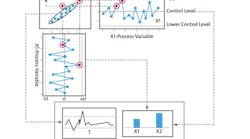

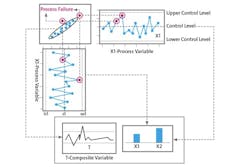

Figure 1. Use of a composite variable T provides insights hard to spot directly from individual variables X1 and X2.

The list goes on. The groundswell of interest throughout industry in “big data” suggests that objective, agile and insightful answers to these operational questions now may be within grasp. Certainly, digital control systems, data historians and additional sensor monitoring points made possible by advances in the Industrial Internet of Things (IIoT) provide manufacturing companies with an unprecedented amount of data. However, these high-dimensional datasets often have a challenging signal-to-noise ratio and a high degree of correlation/redundancy. They also are non-causal in nature: a change in a single sensor reading doesn’t always support a causal conclusion about altered process conditions; drawing such a conclusion instead requires looking at a combination of factors. Together, these traits yield an indirect, incomplete representation of overall asset performance. Consequently, companies paradoxically can have increased process observability but still remain unable to translate the acquired data into a deeper understanding of evolving asset efficiency and reliability.

For highly integrated processes having feedback and recycle components, a single univariate sensor profile seldom suffices for anticipating or diagnosing process interruptions or equipment failures. More often, predicting or spotting deviations in these complex process operations requires understanding the interdependence of several factors, many of which are individually measured by discrete process sensors. However, the interdependence of such sensor information and its contribution to a process deviation frequently are not obvious or feasible to monitor through human observation alone.

Advanced analytics in the form of state-of-the-art statistical multivariate techniques can de-noise process signals as well as capture the correlation among these signals, transforming huge plant datasets into actionable information. The Computational Modeling Center within Air Products’ Global Technology organization has developed ProcessMD, a patented, web-based, predictive-monitoring and fault-diagnostic platform, to provide foresight and insight about asset performance.

“Data is a core, strategic asset, and we have built the digital solution under our ProcessMD brand in order to unlock value from these data streams,” explains Brian Farrell, Air Products Global Technology Director.

Underlying Technology

At the foundation of the platform are predictive models built from multivariate techniques such as projection onto latent structures (PLS) and principal component analysis (PCA). These machine-learning techniques perform a variable transformation that robustly handles sensor redundancy/correlation, measurement error and more, enabling effective detection of defects that may go unnoticed with classical, univariate statistical quality control techniques.
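To make the redundancy point concrete, here is a minimal Python sketch of PCA on three sensors that all respond to one underlying driver. It assumes NumPy; the data are synthetic and the sensor descriptions hypothetical, not drawn from ProcessMD. Autoscaling followed by an eigendecomposition of the correlation matrix shows nearly all of the variance collapsing onto a single component, which is how PCA absorbs correlated, redundant measurements.

```python
import numpy as np


def pca_explained_variance(X):
    """Return the fraction of variance captured by each principal component of autoscaled X."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)        # autoscale: zero mean, unit variance
    eigvals = np.linalg.eigvalsh(np.cov(Z, rowvar=False))   # eigenvalues of the correlation matrix
    eigvals = np.sort(eigvals)[::-1]                        # largest component first
    return eigvals / eigvals.sum()


rng = np.random.default_rng(0)
driver = rng.normal(size=500)  # one underlying process driver (synthetic)
# Three redundant sensors (hypothetical: e.g., a flow, a motor current, a differential pressure)
X = np.column_stack([driver + 0.1 * rng.normal(size=500) for _ in range(3)])
ev = pca_explained_variance(X)
print(np.round(ev, 3))
```

With this little independent noise, the first component carries well over 90% of the variance, so three tags effectively become one latent variable plus noise.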

In some cases, a process may not perform properly even though all process variables have values within the expected ranges. For example, the data represented by X1 and X2 in Figure 1 fall within accepted upper and lower control limits. However, a composite variable T, defined through a multivariate model that captures the underlying positive relationship between X1 and X2, indicates when that correlation has been violated, leading to an off-spec scenario. These data-driven, auto-adaptive models provide advance alerts of subtle abnormalities and deviations from expected behavior across a whole plant or its specific components. The predictive analytics focuses on diagnostics to enable proactive intervention.
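One common way to build a composite statistic like T in Figure 1 is a PCA model monitored with Hotelling's T² (variation along the learned relationship) and the squared prediction error, SPE or Q (departure from that relationship). The sketch below is an illustration under stated assumptions, not ProcessMD's formulation: it uses NumPy, synthetic data, one retained component and an empirical 99th-percentile SPE limit. The point [1, -1] sits well inside each variable's individual ±3σ band, yet it breaks the positive X1/X2 relationship, so its SPE exceeds the limit while the consistent point [1, 1] does not.

```python
import numpy as np

rng = np.random.default_rng(1)
base = rng.normal(size=1000)
# Normal operation: X2 tracks X1 closely (a positive correlation, as in Figure 1)
X = np.column_stack([base + 0.05 * rng.normal(size=1000),
                     base + 0.05 * rng.normal(size=1000)])

mean, std = X.mean(axis=0), X.std(axis=0, ddof=1)
eigvals, eigvecs = np.linalg.eigh(np.cov((X - mean) / std, rowvar=False))
p = eigvecs[:, [-1]]   # loading of the dominant component (eigh sorts eigenvalues ascending)
lam = eigvals[-1]      # variance of that component's scores


def t2_and_spe(x):
    """Hotelling T^2 on the retained score and SPE (Q) on the model residual."""
    z = (x - mean) / std
    t = z @ p                  # score on the retained component, shape (1,)
    t2 = float(t @ t / lam)
    resid = z - t @ p.T        # part of z the one-component model cannot explain
    return t2, float(resid @ resid)


def univariate_ok(x):
    """Classical check: every variable individually within mean +/- 3 std."""
    return bool(np.all(np.abs(x - mean) < 3 * std))


# Empirical 99th-percentile SPE limit from normal data (assumed; analytical limits also exist)
spe_limit = np.quantile([t2_and_spe(x)[1] for x in X], 0.99)

for label, x in [("consistent", np.array([1.0, 1.0])), ("broken", np.array([1.0, -1.0]))]:
    t2, spe = t2_and_spe(x)
    print(label, "univariate ok:", univariate_ok(x), "SPE alarm:", spe > spe_limit)
```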

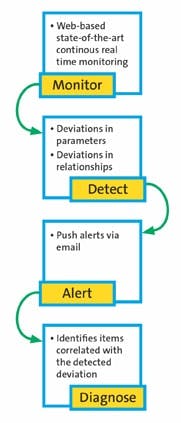

Figure 2. The software provides a cohesive platform to monitor operations, detect deviations, send out alerts and diagnose issues.

The ProcessMD platform continuously monitors process operations in real time through distributed computing technology to provide Air Products engineers with a multi-tier perspective of process and equipment health (Figure 2). A summary dashboard allows for the rapid visual evaluation of equipment/process health over the various distributed assets. When an issue arises, the engineer can quickly deep-drill to the specific equipment/process of interest and then leverage the multivariate techniques to ascertain a likely root cause. ProcessMD also proactively sends alert emails to notify pertinent stakeholders about an emerging issue. The platform currently handles equipment and process condition monitoring for Air Products’ geographically dispersed and constantly evolving manufacturing fleet.
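The summary-then-deep-drill workflow can be pictured as a tree of assets whose health rolls up from equipment to plant. In the hypothetical Python sketch below, each node carries an assumed normalized deviation score (1.0 meaning its own model is at its limit), a parent reports the worst score beneath it, and drill-down follows the worst branch. The class, node names and scores are illustrative only and do not describe ProcessMD's internals.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    """One level of a hypothetical asset hierarchy (plant, subsystem or equipment)."""
    name: str
    score: float = 0.0  # assumed normalized: 1.0 means this node's own model is at its limit
    children: list[Node] = field(default_factory=list)

    def health(self) -> float:
        # Worst-case rollup: a parent is flagged if any descendant is
        return max([self.score] + [child.health() for child in self.children])

    def drill_down(self) -> list[str]:
        # Follow the worst branch until a node's own score explains the deviation
        path, node = [self.name], self
        while node.children:
            worst = max(node.children, key=lambda c: c.health())
            if worst.health() < node.score:
                break
            node = worst
            path.append(node.name)
        return path


plant = Node("SMR plant", 0.3, [
    Node("reformer", 0.4, [Node("forced-draft fan motor", 1.4), Node("burners", 0.5)]),
    Node("steam system", 0.2, [Node("boiler feed-water pump", 0.6)]),
])
print(plant.health())      # 1.4
print(plant.drill_down())  # ['SMR plant', 'reformer', 'forced-draft fan motor']
```

A worst-case rollup keeps a single hot spot visible on the summary view; averaging would be the alternative design, at the cost of diluting one failing machine among many healthy ones.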

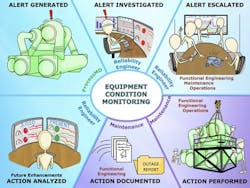

Equipment condition monitoring entails developing empirical models that capture the health of a piece of equipment at varying levels of granularity. These inferential models are used to conduct autonomous, real-time monitoring of both fixed and moving equipment through adaptive control limits that track such monitoring points as motor-winding and bearing temperatures, vibration levels and more. The proactive alert-escalation framework then alerts relevant plant personnel to an emerging issue prior to a mechanical failure or plant trip, while the advanced multivariate diagnostics enable machinery and reliability engineers to objectively evaluate the burgeoning issue (Figure 3).
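Adaptive control limits can be implemented in several ways; one simple option, assumed here rather than taken from ProcessMD, is to track each monitoring point's mean and variance with exponentially weighted updates and alarm at k standard deviations. `AdaptiveLimit`, its parameters and the synthetic bearing-temperature data are hypothetical.

```python
import math
import random


class AdaptiveLimit:
    """Hypothetical adaptive k-sigma limit for one monitoring point (e.g., a bearing temperature).

    Mean and variance are tracked with exponentially weighted updates, so the limits
    follow slow, legitimate changes in operation.
    """

    def __init__(self, alpha=0.05, k=3.0, warmup=30):
        self.alpha, self.k, self.warmup = alpha, k, warmup
        self.n, self.mean, self.var = 0, 0.0, 0.0

    def update(self, x):
        """Return True if x breaches the current limits; otherwise fold x into the baseline."""
        self.n += 1
        alarm = self.n > self.warmup and abs(x - self.mean) > self.k * math.sqrt(self.var)
        if not alarm:
            a = max(self.alpha, 1.0 / self.n)  # plain running mean/variance during start-up
            diff = x - self.mean
            self.mean += a * diff
            self.var = (1 - a) * (self.var + a * diff * diff)
        return alarm


rng = random.Random(0)
monitor = AdaptiveLimit()
false_alarms = sum(monitor.update(rng.gauss(70.0, 1.0)) for _ in range(500))
fault_alarm = monitor.update(80.0)  # a sudden 10-sigma excursion
print(false_alarms, fault_alarm)
```

Points that breach the limit are deliberately kept out of the baseline so a developing fault cannot widen its own limits; the trade-off is that a genuine, permanent change in operation keeps alarming until an engineer re-baselines the monitor.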

Process condition monitoring seeks to capture the “heartbeat” of the process first by decoupling external influences from internal process levers to identify actionable performance degradation. The modeling extends to capturing process variability at a subsystem and individual equipment level (Figure 4). Data-driven models developed within this hierarchical framework enable tracking and rationalizing the variability in key process indicators along with holistic multivariate metrics that help establish relative process baselines. Interactive escalating alerts warn of deviations; deep-drilling multivariate diagnostics foster fast and efficient identification of the root cause of the deviation.
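Decoupling external influences can be illustrated with ordinary least squares: fit a key indicator against external drivers over a reference period assumed healthy, then watch what those drivers cannot explain. In this NumPy sketch the KPI, the ambient-temperature and production-rate drivers, and the slow fouling term are all synthetic assumptions. The fouling is partly masked by the seasonal swing in the raw KPI, but it stands out clearly in the residual.

```python
import numpy as np

rng = np.random.default_rng(4)
days = np.arange(365)
ambient = 15 + 10 * np.sin(2 * np.pi * days / 365)        # external: seasonal ambient temperature, deg C
rate = 100 + 5 * rng.normal(size=days.size)               # external: production rate, % of design
fouling = np.where(days > 200, 0.005 * (days - 200), 0.0) # hidden internal degradation (synthetic)
# Hypothetical KPI (e.g., specific energy): rises with ambient, falls with rate, plus fouling and noise
kpi = 10 + 0.05 * ambient - 0.02 * rate + fouling + 0.05 * rng.normal(size=days.size)

# Fit the external-influence model only on a reference period assumed to be healthy
ref = days < 150
A = np.column_stack([np.ones(days.size), ambient, rate])
coef, *_ = np.linalg.lstsq(A[ref], kpi[ref], rcond=None)
residual = kpi - A @ coef  # what the external drivers cannot explain

print("reference residual mean:", round(float(residual[ref].mean()), 3))
print("last-30-day residual mean:", round(float(residual[-30:].mean()), 3))
```

The same pattern nests naturally: a plant-level residual can be broken down further by fitting subsystem and equipment indicators against their own external drivers.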

Impressive Results

The ProcessMD platform had its first field deployment at one of Air Products’ HyCO (syngas) plants in 2010. Today, it monitors hundreds of the company’s plants and thousands of fixed and moving pieces of equipment at those sites, contributing multi-million-dollar cost avoidance and productivity benefits annually on average. It has garnered hundreds of site testimonials.

As the world’s leading hydrogen supplier, Air Products owns and operates numerous steam methane reformers (SMR) and the world’s largest hydrogen distribution network. The energy-intensive high-temperature reforming process leads to many efficiency and reliability opportunities. For example, it is important to maintain the efficiency and reliability of the forced-draft fan to ensure sufficient flow of air for fuel combustion, which provides the necessary heat to drive the endothermic reaction occurring within the reformer tubes. At one Air Products site, ProcessMD proactively identified that the windings in the motor driving the forced-draft fan were warmer than they should be and alerted reliability engineers. An inspection by the site maintenance team revealed dirty filters were restricting cooling air flow. Once the filters were replaced, the motor winding temperature dropped, preventing an overheating of the motor components along with associated collateral damage such as an unplanned SMR shutdown.

Figure 3. The platform not only alerts operating personnel to an emerging problem but also provides troubleshooters with multivariable diagnostics.

Another example of equipment condition monitoring at work for the SMR process was the detection of high vibration readings on a boiler feed-water pump at another site. Because the boiler generates the steam necessary for the SMR process, a disruption in feed-water flow would severely curtail production rates. Identifying anomalous vibration readings is difficult because vibration depends on multiple factors such as loading. However, multivariate techniques can capture the underlying relationships and detect when vibration levels exceed model-based expectations. Through ProcessMD oversight, the plant was able to proactively switch to a secondary pump prior to an SMR trip. Further evaluation of the original pump revealed bearing wear that was contributing to increased vibration readings. The preemptive intervention avoided collateral machine damage with potential costs exceeding hundreds of thousands of dollars.
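The idea of a load-dependent expectation can be shown with a single regressor. This pure-Python sketch (synthetic data; the units, coefficients and 3σ threshold are assumptions, and a real model would use more inputs than load alone) fits expected vibration as a straight line in load from healthy history and flags readings whose residual is large. A fixed threshold set anywhere between 4.0 and 5.0 mm/s would classify the two readings below the opposite way.

```python
import random
import statistics


def fit_expected_vibration(loads, vibs):
    """Least-squares line vib = a + b * load fitted on healthy history, plus residual spread."""
    mx, my = statistics.fmean(loads), statistics.fmean(vibs)
    b = sum((x - mx) * (y - my) for x, y in zip(loads, vibs)) / sum((x - mx) ** 2 for x in loads)
    a = my - b * mx
    sigma = statistics.stdev([y - (a + b * x) for x, y in zip(loads, vibs)])
    return a, b, sigma


def is_anomalous(model, load, vib, k=3.0):
    """Flag a reading whose deviation from the load-based expectation exceeds k residual sigmas."""
    a, b, sigma = model
    return abs(vib - (a + b * load)) > k * sigma


rng = random.Random(2)
loads = [50 + 0.5 * i for i in range(100)]                   # % of rated load (hypothetical)
vibs = [1.0 + 0.04 * x + rng.gauss(0, 0.05) for x in loads]  # mm/s, healthy history (synthetic)
model = fit_expected_vibration(loads, vibs)

print(is_anomalous(model, 100, 5.0))  # False: a high reading, but expected at full load
print(is_anomalous(model, 50, 4.0))   # True: a lower reading, but far above expectation at half load
```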

SMR plants also offer substantial opportunities for process condition monitoring. Their heat-integrated design means that fouling of heat exchangers can significantly impact overall process efficiency. Assessing the relative impact of incremental degradation in a single heat exchanger on the overall process can be challenging, though. Changing feed composition, process operating temperatures and production rates make it daunting to break down process variability and assign it to the appropriate contributors. However, the hierarchical modeling approach can address this operational challenge. For example, ProcessMD not only detected a reduction in export steam but also subsequently attributed the loss of export steam capability to the fouling of a heat exchanger, which was further corroborated by an observed reduction in the heat transfer versus design. Maintenance on the heat exchanger restored the export steam capability.
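Attributing a deviation to a particular unit is often done with contribution analysis. In the NumPy sketch below (hypothetical tag names, synthetic data, and a one-component PCA model standing in for the article's hierarchical models), each variable's squared residual is its contribution to SPE; a shift in the exchanger outlet temperature that the production-rate relationship cannot explain dominates the result. Contributions smear onto correlated variables, so they point engineers to where to look rather than proving a cause, which is why corroboration such as the heat-transfer check above matters.

```python
import numpy as np

names = ["feed_rate", "export_steam", "exchanger_outlet_temp", "fuel_flow"]
coefs = np.array([1.0, 0.8, -0.6, 0.9])  # hypothetical response of each tag to production rate

rng = np.random.default_rng(3)
rate = rng.normal(size=500)
X = np.outer(rate, coefs) + 0.05 * rng.normal(size=(500, 4))  # healthy history (synthetic)

mean, std = X.mean(axis=0), X.std(axis=0, ddof=1)
eigvals, eigvecs = np.linalg.eigh(np.cov((X - mean) / std, rowvar=False))
P = eigvecs[:, [-1]]  # one retained component: the shared production-rate effect


def spe_contributions(x):
    """Per-variable squared residuals; they sum to the sample's SPE."""
    z = (x - mean) / std
    resid = z - (z @ P) @ P.T
    return resid ** 2


# A fouled exchanger: outlet temperature shifts while the other tags stay consistent with rate
sample = coefs * 0.5
sample[2] += 0.5
contrib = spe_contributions(sample)
print(dict(zip(names, np.round(contrib, 3))))
print("largest contributor:", names[int(np.argmax(contrib))])
```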

At another SMR site, ProcessMD determined that a combination of both heat exchanger fouling and increased pressure drop on a downstream process unit affected the plant’s ability to meet expected production rates. After maintenance on the respective units, production rate returned to normal.

Unlocking Value From Data

All manufacturing facilities can have equipment and process issues that cause costly downtime and expensive maintenance. Unfortunately, the settings of control system alarms on single measurement data points often are too wide to provide meaningful early warning of a condition to avoid an impending equipment failure or process interruption. Multivariate techniques that capture the correlation within the dataset therefore are critical to proactively identifying these issues.

Figure 4. Users can check process variability at an overall plant, subsystem and individual equipment level.

With the advent of the IIoT, manufacturing companies now are amassing large datasets. Sensors on all manner of equipment such as compressors, pumps, motors, expansion turbines, blowers, cooling towers and heat exchangers are streaming data to process historians at high rates. Advanced data analytics enable the transformation of these enormous datasets into reduced dimensional, composite variables that take into account the inherent characteristics of the current operating condition, capturing the heartbeat of the process. For Air Products, ProcessMD is that asset doctor — diagnosing process issues and unlocking value from data.

Air Products is planning to offer this technology to customers in the form of a Platform as a Service (PaaS) for real-time asset performance management and diagnostics. This new digital IIoT offering will seek to provide differentiated process and equipment insight in an open-loop, advisory fashion, which an operator/engineer then can leverage to make informed operational decisions to minimize unplanned maintenance and concurrently maximize process reliability and efficiency.

PETER M. VERDERAME is a senior principal systems engineer within the Computational Modeling Center at Air Products, Allentown, Pa. SANJAY MEHTA is the manager of the Computational Modeling Center. E-mail them at [email protected] and [email protected].