Define, Scope and Scale Improvements Via Operational Analytics

Across industry, “disruption” is the word often used to describe a fundamental feature of today’s digital economies. It encompasses a broad set of market characteristics that have come about due to improvements in technology. Computing power is cheap and mobile, data is ubiquitous and easier to access than ever, and physical objects can increasingly connect and communicate.

Executives are deploying technologies such as smart, connected things; wireless networks; cloud computing; digital twins; and analytics to modernize, improve and transform their business processes, services, and cities.

There are now many paths to obtain improvements using data. However, there is no single, “right” path, nor does every operational area need to reach some transformational state of maturity. In fact, that may be overkill for parts of operations. Faced with this reality, executives are finding it difficult to understand how to define, scope and scale improvements using operational analytics. As a result, many organizations are still working through what to use, where to use it and when to stop.

This article discusses the role and value of existing and new systems and solutions for improving industrial operations via data and analytics. To help frame the discussion, we provide a maturity model for operational analytics.

Why Companies Focus on Analytics

Analytics can underpin any endeavor in which data is produced. In today’s increasingly connected, digital world, that means they are applicable virtually anywhere. Look no further than historians, connected robots in plants, smart meters in electrical distribution infrastructure and energy management sensors in government buildings.

The benefits of analytics are evident. From creating new business models and achieving margin-boosting operational optimization to supporting consumer choices for energy consumption and reducing pollution, the effective use of analytics can drive benefits across the enterprise, including:

• Revenue generation from new methods for serving existing customers and ways to reach new ones

• Margin protection through value-added aftermarket services to offset the increasing commoditization of physical equipment sales

• Asset and process optimization through improved, proactive, and highly automated management of infrastructure, resources, and capital

• Higher satisfaction and retention by engaging customers with highly valued products and services where and when they need them

• Operational flexibility and responsiveness that come from using data to improve the agility, speed, and accuracy of decision making

By embracing analytics to help drive better decisions and operating performance across all areas, organizations have an opportunity to modernize and transform their businesses to compete in 21st-century digital economies. The difference now is that many organizations are looking to integrate more sources of new, complex and often real-time data for analytics.

They are also looking to combine that information with the performance data they have been gathering for decades. The data is structured and unstructured, and represents a dizzying array of information in many formats: assets, historians, logs, paper, PDFs, backbone operating systems, financials, spreadsheets, pictures and video, audio, weather, social media, etc.

Many Paths to Operational Improvement

The marketing hype surrounding new solutions, the role of legacy technology, and differences in analytics techniques make it challenging for business and operations executives in industry to understand how to move forward. Too often, the discovery process for doing so gets bogged down or derailed by complex discussions on technology and technique.

There are many different types of operational systems and solutions, both new and legacy. Each has a role in bringing value to the operations, from gathering and storing critical data to extracting and automating insight from it. To understand what each is trying to do, it is helpful to first examine what area of the operation is being impacted and what systems are commonly found within them. These include operations planning, process control, asset performance and operating performance.

It is also helpful to align those areas of impact with appropriate techniques and the associated systems and solutions. ARC has identified three overarching ways these can provide value:

• Visibility and context: Improves intelligence about performance through descriptive analysis, visualization, and measurement

• Applied math and statistics: Uses traditional mathematical techniques and designed models to enable comparison, simulation and control

• Machine learning, artificial intelligence, and cognitive analytics: Uses models and algorithms to automate into operations the human capacity for recognition

Technology Overshadows Objectives

Executives in virtually every industrial segment have been exploring how to best leverage these techniques to improve operational and business performance. Some markets, particularly consumer-facing ones, have made rapid progress, built upon the strong foundations of customer segmentation and technology supporting personalized, multichannel service.

Even with adoption accelerated by connected devices and data proliferation from the Internet of Things (IoT), businesses are still at the front end of adopting certain analytics methods and techniques.

The solutions are far ranging, such as enhancements in traditional statistical methods, model-based techniques that include streaming data, and the use of machine learning. Additionally, some of the techniques can also be applied for specific effect, such as natural language processing and semantic search.

Yet, simultaneous technology advancements have caused a good deal of confusion in the marketplace. Advancements in analytics, particularly predictive and real-time techniques, have coincided with the emergence of industrial IoT and application platforms. The result is that buyers often don’t understand where and how solutions can be applied to optimal effect, nor how to best integrate legacy technology investments. Also, they are uncertain as to whether solutions are ends in and of themselves, or just set the stage for additional improvement. The reality is that both can be true.

From an analytics perspective, the array of terms can be overwhelming. Anomaly detection, cognitive computing, prescriptive analytics, dynamic visualization, artificial intelligence, fog and edge computing, supervised and unsupervised learning, unstructured data, and machine learning are just a small sample of the many terms, often loosely defined, buzzing about the market.

For IoT, the term “platform” has become ubiquitous in marketing, frequently applied to a variety of technologies, including analytics. Device connectivity, standalone analytics, and cloud-based platforms with IoT functionality are often difficult to tell apart. Capabilities often overlap or are poorly differentiated in marketing.

The technological terms associated with analytics and IoT no longer reside solely within the purview of data scientists and information technology groups. As operations personnel and business executives have been tasked with building business cases, particularly for analytics, they find it necessary to understand technology definitions and distinctions, many of which are nuanced.

The result is business cases and buyers heavily focused on sorting through technology questions. This focus typically centers on four areas, causing analytics projects to devolve into technology discussions and pulling them away from business transformation objectives. These areas are:

• Techniques: A common starting point often revolves around comparing different emerging techniques, as these really are the change enablers for digital transformation, along with IoT. Generally, advanced analytics methods are lumped under broad umbrellas of predictive and prescriptive methods. We hear stories from many buyers, comfortable with traditional means of comparison, who have endured pitch after pitch and ended up unable to compare solutions in meaningful ways.

• Users: In a time of “do more with less” thinking, adding head count, whether via full-time employees or contracted data scientists, isn’t palatable or sustainable. Buyers often get bogged down trying to select a solution that seems to limit strain on internal subject matter experts and/or avoid adding costly data science skills. The potential downside is that the value of analytics might be limited as a tradeoff for reducing organizational disruption.

• Tools: Hand in hand with the user issues noted above, self-service tools have proliferated as a natural product development extension for analytics solutions. To support role-based use, many providers are accelerating their development of tools and “sandbox” environments for data scientists and citizen data scientists (engineers leveraging algorithms, statistics, and modeling). An example is rich data visualization and blending, where data streams can be added, connected, and deployed via drag-and-drop methods. These tools are powered by an underlying analytics engine, so it is easy for the citizen data scientist to create and visualize more “aha” moments using data, algorithms, and models.

• Delivery: As organizations grapple with cloud-based solutions for software (SaaS), platforms (PaaS), and infrastructure (IaaS), those discussions often run into existing traditional IT structures and service agreement models that make change difficult. While some of these barriers are clearly valid (such as regulatory compliance), they often limit or delay innovation in problem solving.

Mapping Analytics to a Maturity Model

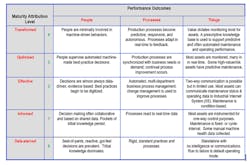

A good way to reorient analytics discussions firmly around value is to look at them through the lens of their desired outcome. This can be done by applying a maturity model that breaks down analytics by their impact on people, processes, and things. This outcome-based model better demonstrates what can be achieved in comparison to a technology-focused lens.

A maturity model helps companies move away from often-ineffective comparison shopping and focus on their business transformation objectives. Analytics users will find it easier to determine where any one technology system or technique fits in terms of moving an organization to a more mature state. This is especially helpful as almost every organization is likely to have wide variations in operational analytics maturity.

Beginning with level zero, ARC classifies operational analytics maturity into five stages as they relate to people, processes, and things.

Value of Iterative Improvement

Maturity is iterative. Many technologies are fundamental enablers of additional improvement rather than a desired end state, and progress doesn’t need to be equal on all operational fronts. By pursuing iterative improvement, organizations will reach a point where it becomes obvious that additional improvements aren't worth the cost or drain on resources. In fact, striving for transformational change may be overkill for parts of operations, leading to open-ended pilots and overspending.

For example, a best practice may be ideal, but it might also be fine for people to carry it out reactively in applications where something like artificial intelligence might not fit. Or, one-way communication and control of a thing might be sufficient if it can be determined that there is no more value to be gained.

Consider an organization burdened with a dozen or so siloed work and asset systems, supported by workers that use spreadsheets as part of process and asset decisions.

Eliminating manual analysis and pulling data from disparate systems into a real-time system of record for assets doesn’t, in itself, define a transformed operation. However, it does set the foundation for maturational improvements across people, processes, and things.

Work requirements can be more fully understood and aligned to craft skills and availability, enabling higher resource utilization and eliminating costly rework. Analysis of work can set the stage for continual process improvements that extend the lifecycle of assets or improve service reliability. Asset data can flow into capital planning processes to improve investment strategies or stretch budgets. In making these improvements, the organization matures some operational components from informed to effective.

By doing so, an organization can then determine if there is opportunity to automate failure identification via machine learning, for example, perhaps setting the stage for implementing predictive maintenance strategies. The type of data used, resources involved, and desired improvement might then inform the technique used, from real-time statistics-driven control to predictive cognitive analytics. Additional technology requirements also become more apparent, as infrastructure visibility and device connection would be fundamental to this improvement.
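
To make the simpler end of that range concrete, here is a minimal sketch of a statistics-driven check, assuming a basic three-sigma control limit. The function names, the pump scenario, and the vibration readings are all hypothetical and not drawn from any ARC model or vendor product. The sketch shows only how readings from normal operation can define limits that flag abnormal values.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Return (lower, upper) limits at k sample standard deviations
    around the mean of the baseline readings."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center + k * spread


def flag_out_of_control(readings, limits):
    """Return (index, value) pairs for readings outside the limits."""
    lower, upper = limits
    return [(i, x) for i, x in enumerate(readings) if x < lower or x > upper]


# Hypothetical vibration readings (mm/s) from a pump in normal operation.
baseline = [2.1, 2.0, 2.2, 1.9, 2.1, 2.0, 2.2, 2.1, 1.9, 2.0]
limits = control_limits(baseline)

# Hypothetical new readings; the last one is deliberately abnormal.
new_readings = [2.0, 2.1, 2.2, 3.5]
alerts = flag_out_of_control(new_readings, limits)
print(alerts)
```

A check like this may be all that some parts of an operation need. Whether to move beyond it to machine learning would depend on the data, resources, and desired outcome discussed above.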

The result is enabling technology seen through the lens of operational analytics maturity. In this scenario, there is no worst-to-best orientation that then prompts ineffective technology comparisons. Instead, there is only the correct fit based on the desired outcomes.

Greg Gorbach is vice president at ARC Advisory Group. Mike Guilfoyle is director of research.

The upcoming ARC Industry Forum in Orlando, Florida, Feb. 12-15, 2018, will feature a dedicated track on advanced analytics for manufacturing. To learn what your peers are doing in this dynamic area and participate in the conversation, Chemical Processing readers may want to consider attending the event.