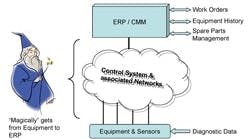

Without communication among the components of a control system and, just as importantly, between the control system and the corporate network (including manufacturing execution and enterprise resource planning systems), it’s impossible to make product. While plants realize that maintenance of field devices and production equipment is crucial, they don’t always pay sufficient attention to monitoring and maintaining the network that interconnects these components. Yet this is essential for high reliability. Unfortunately, for most people using these systems, “all the stuff” that makes them work is “magic,” and this magic is becoming more complex (Figure 1).

Figure 1. Merlin at work -- Many users view data handling and the working of control systems and networks as magic.

As we all know, if we don’t have a reliable control system with availability as close to 100% as possible, production staff quickly lose faith in the system and circumvent it with jumpers, loops in manual, etc., that defeat the purpose of the investment. Even “finicky” analyzers have a 95% minimum acceptable availability.
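
To put an availability figure such as 95% in perspective, the arithmetic can be sketched as below. This is a minimal illustration that assumes continuous, year-round operation (8,760 hours); the function name is hypothetical and not taken from any vendor tool.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 h, assuming continuous year-round operation


def annual_downtime_hours(availability: float) -> float:
    """Return the hours per year a system is unavailable at a given availability."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be a fraction between 0 and 1")
    return (1.0 - availability) * HOURS_PER_YEAR


if __name__ == "__main__":
    # Compare a few availability targets (illustrative values only).
    for target in (0.95, 0.99, 0.999):
        hours = annual_downtime_hours(target)
        print(f"{target:.1%} available -> {hours:.1f} h/year unavailable")
```

Under these assumptions, 95% availability still allows roughly 438 hours, or about 18 days, of unavailability a year.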

A key part of any control system is the infrastructure that carries the signals between the field devices and the controllers. Without signals, there is neither data on which to base control nor a means to manipulate the final control elements (valves, drives, etc.) to adjust process operating conditions. However, as the expected level of interconnectivity between the various systems increases, the difficulty of maintaining them rises sharply.

Essential monitoring

System reliability depends on proper design — which includes taking into account security and regular maintenance. Correct maintenance requires understanding the condition of the system. A single snapshot in time, say, when the system was started up and commissioned, isn’t good enough. Instead, you must gather and compare data over time so you can predict when one or more components are likely to fail.
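
Comparing data over time can be as simple as fitting a trend line to periodic readings and estimating when it will cross a tolerance limit. The sketch below assumes the drift is roughly linear, which real components may not obey; the function name, the readings and the limit are all hypothetical.

```python
from typing import Optional, Sequence


def days_until_limit(days: Sequence[float], values: Sequence[float],
                     limit: float) -> Optional[float]:
    """Fit a least-squares line to (day, value) readings and estimate how many
    days after the last reading the trend reaches `limit`.

    Returns None if the fitted trend does not reach the limit in the future.
    """
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired readings")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("readings must be taken at different times")
    slope = sum((x - mean_x) * (y - mean_y)
                for x, y in zip(days, values)) / sxx
    intercept = mean_y - slope * mean_x
    if slope == 0:
        return None  # flat trend never reaches the limit
    crossing_day = (limit - intercept) / slope
    remaining = crossing_day - days[-1]
    return remaining if remaining > 0 else None


if __name__ == "__main__":
    # Hypothetical signal-level readings taken every 30 days.
    sample_days = [0, 30, 60, 90]
    sample_levels = [0.80, 0.77, 0.74, 0.71]
    print(days_until_limit(sample_days, sample_levels, limit=0.65))
```

With these made-up readings the level falls 0.03 per month, so the sketch estimates about 60 days before the 0.65 limit is reached. A single commissioning snapshot could not provide that estimate.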

Manually capturing these data on a regular schedule (at a minimum, at the vendor-recommended intervals) forms the basis of a preventive maintenance program that verifies everything is within tolerance and, ideally, spots when a component needs to be replaced. The data collected this way normally then have to be keyed by hand into appropriate software and, in most cases, interpreted by a knowledgeable end user. Unfortunately, this is labor- and time-intensive and depends upon staff expertise, which is growing increasingly scarce as veteran staff retire.

Fortunately, the same systems that form the networks now have components, tools and software to monitor the networks in real time and provide analysis of the data to alert you that the system is degrading well in advance of an incident. In addition, because control systems are incorporating more and more “Commercial Off The Shelf” (COTS) technology as part of their infrastructure, many of the tools used for business networks can serve in the control environment as well.

Figure 2. Network architecture -- Plants usually layer communications into four levels from field devices to corporate business systems.

An “ideal” network-monitoring tool shouldn’t impact the network it’s watching. However, in practical terms this isn’t possible. So, we aim to minimize interruptions or traffic on the network by operating in passive or monitoring mode and simply recording the information flowing by or through the tool, which in many cases is software residing on either a computer or a dedicated server/appliance on the network. One way to minimize the impact on the network is to transmit the data being gathered via a separate or parallel network. In the case of Level 0 and Level 1 (Figure 2), wireless networks are starting to handle some of this parallel data transfer. Wireless networks have a different range of conditions, constraints and considerations than conventional or wired networks (see “Wireless comes with strings” below).

Maintaining reliability

It’s often the “little things” that can create the greatest grief — this certainly is true for network systems. Surprisingly, terminations, which everyone takes for granted, are one of the largest causes of problems. Difficulties can develop if the terminations aren’t properly torqued, or through vibration, someone tripping over a cable, corrosion (because the unit isn’t properly vented or the vent becomes plugged), short circuit (this one will often manifest itself right away), surge and associated damage, or electromagnetic or radio frequency interference. So, it’s critical that this backbone of the system be properly installed and maintained.

Fortunately, the majority of the analytical tools on the market, especially if they’re connected online, can catch many post-start-up problems as they develop and before they cause a process interruption.

A common practice to maintain high levels of reliability is to install redundancy. This approach is often employed at Levels 1 and 2, and, occasionally for special applications such as safety systems, at Level 0 as well. Redundant systems have duplicate hardware identically configured (I/O points, software, etc.) continuously monitoring the operating condition of each other, so that if one unit fails the other is ready to assume full operation without interruption to the process.
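
The gain from redundancy can be estimated with the standard parallel-availability formula. The sketch below assumes that the units fail independently and that fail-over itself always works. Both are simplifications, because common causes such as a shared power supply or a shared termination fault reduce the benefit. The function name is hypothetical.

```python
def redundant_availability(unit_availability: float, units: int = 2) -> float:
    """Availability of `units` identical units in parallel, where the system
    is up as long as at least one unit is up (independent failures assumed)."""
    if not 0.0 <= unit_availability <= 1.0:
        raise ValueError("unit_availability must be between 0 and 1")
    if units < 1:
        raise ValueError("units must be at least 1")
    # The system is down only when every unit is down at the same time.
    return 1.0 - (1.0 - unit_availability) ** units


if __name__ == "__main__":
    single = 0.99  # illustrative figure, not a vendor specification
    print(f"single unit: {single:.4f}")
    print(f"redundant pair: {redundant_availability(single):.4f}")
```

On these assumptions, pairing two units that are each available 99% of the time yields a combined availability of about 99.99%.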

This fail-over capability is often used as a means to install system upgrades. When an upgrade needs to be made, one of the redundant units or nodes is taken offline, the upgrade (typically software) is installed and the upgraded unit is brought back into service as the standby or secondary system. The primary system (which still has the old software release) then is forced to fail-over, so the backup unit with the upgrade installed becomes the primary controller and the upgrade can be made to the second unit.
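
The upgrade sequence described above can be modeled in a few lines. This is only a sketch of the ordering of steps: the `Controller` class and its fields are hypothetical, and the actual installation work is reduced to setting a version string.

```python
from dataclasses import dataclass


@dataclass
class Controller:
    name: str
    role: str        # "primary" or "standby"
    version: str
    online: bool = True


def upgrade_unit(unit: Controller, new_version: str) -> None:
    """Take a standby unit offline, install the upgrade, return it to standby."""
    if unit.role != "standby":
        raise RuntimeError(f"{unit.name} is primary; fail over before upgrading")
    unit.online = False          # unit removed from service
    unit.version = new_version   # placeholder for the real installation work
    unit.online = True           # back in service as the standby


def fail_over(primary: Controller, standby: Controller) -> None:
    """Swap roles so the standby unit takes control of the process."""
    if not standby.online:
        raise RuntimeError("standby is offline; cannot fail over")
    primary.role, standby.role = "standby", "primary"


def rolling_upgrade(a: Controller, b: Controller, new_version: str) -> None:
    """Upgrade a redundant pair one unit at a time, keeping one unit in control."""
    primary, standby = (a, b) if a.role == "primary" else (b, a)
    upgrade_unit(standby, new_version)   # 1. upgrade the standby
    fail_over(primary, standby)          # 2. force fail-over to the upgraded unit
    upgrade_unit(primary, new_version)   # 3. upgrade the former primary


if __name__ == "__main__":
    unit_a = Controller("A", "primary", "1.0")
    unit_b = Controller("B", "standby", "1.0")
    rolling_upgrade(unit_a, unit_b, "1.1")
    print(unit_a, unit_b)
```

The guard in `upgrade_unit` reflects the key point of the procedure: a unit is never upgraded while it is the one controlling the process.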

Another practice to maintain high reliability is to have a test bed that has a similar architecture and layout as the installed control system, with at least one of each component of the Level 1 and 2 system being used and running. This serves two purposes: first, it provides a set of spare parts that are known to be functional and at the same software and firmware revision levels as the running plant and, second, it’s where new software updates are first tested by plant staff, to verify the integrity of the upgrade as well as to check the procedure developed for the upgrade. Only larger facilities typically have their own test beds. However, every distributed control system (DCS) supplier uses just such a system as part of its quality assurance, development and release process.
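
A test bed is useful as a source of spares only if its revision levels actually match the plant’s. One way to check, sketched below, is to compare two inventories of component revisions. The component names and revision strings are made up, and how such inventories are exported from a given system is outside the scope of this sketch.

```python
from typing import Dict, List, Tuple


def revision_mismatches(plant: Dict[str, str],
                        test_bed: Dict[str, str]) -> List[Tuple[str, str, str]]:
    """Return (component, plant_rev, test_bed_rev) for every plant component
    whose revision differs on the test bed or that the test bed lacks."""
    mismatches = []
    for component, plant_rev in sorted(plant.items()):
        bed_rev = test_bed.get(component, "missing")
        if bed_rev != plant_rev:
            mismatches.append((component, plant_rev, bed_rev))
    return mismatches


if __name__ == "__main__":
    # Hypothetical inventories keyed by component type.
    plant_inventory = {"controller": "4.2", "io_card": "1.7", "switch": "2.0"}
    bed_inventory = {"controller": "4.2", "io_card": "1.6"}
    for item in revision_mismatches(plant_inventory, bed_inventory):
        print(item)
```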

Today, software largely is COTS technology, predominantly Windows. This brings with it the associated task of managing all the patches and upgrades for the operating system and also for related software such as virus scanners, firewalls, etc. Unfortunately, as yet there isn’t an automated tool to help. You must work closely with your host system supplier because, in many cases, a change to the software also can alter system settings required for control communications. The best safeguard here is to subscribe to the support program of your system software suppliers and have them do the necessary testing to verify that any upgrades won’t impact your system. Regardless, test every upgrade on an offline system first.

Protecting the investment

Any capital spending on your control system undoubtedly had to promise an acceptable return on investment (ROI). Without reliable signals you don’t have trustworthy control — and you imperil your investment. So, there’s significant economic incentive to maintain the system so you can achieve the ROI. More than that, though, any control system has a risk management aspect — that is, dealing with the threat of unplanned outages and, just as importantly, effectively managing the frequency and duration of each stoppage of plant production. A predictive maintenance system and associated data are the keys to being able to accurately forecast when a device is likely to fail — allowing for proactive action at a convenient time instead of an emergency outage resulting from a component failure. The system must be able to analyze and interpret the data and then act, such as, at a minimum, initiating the appropriate work order for any required repairs.
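
The “analyze, interpret, then act” step can be sketched as a simple decision rule that turns a failure forecast into a work order. Everything here is an illustrative assumption: the `WorkOrder` fields, the 14-day safety margin, the 180-day planning horizon and the device tag do not come from any particular maintenance system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkOrder:
    device: str
    priority: str   # "planned" or "urgent"
    note: str


def plan_work_order(device: str,
                    days_to_failure: Optional[float],
                    days_to_next_outage: float,
                    safety_margin_days: float = 14.0,
                    planning_horizon_days: float = 180.0) -> Optional[WorkOrder]:
    """Turn a failure forecast into a work order, or None if no action is due."""
    if days_to_failure is None or days_to_failure > planning_horizon_days:
        return None  # nothing forecast within the planning horizon
    if days_to_failure > days_to_next_outage + safety_margin_days:
        # The device should last until the scheduled outage with margin to spare.
        return WorkOrder(device, "planned",
                         "repair during the next scheduled outage")
    return WorkOrder(device, "urgent",
                     "forecast failure precedes the next outage; schedule now")


if __name__ == "__main__":
    print(plan_work_order("FT-101", days_to_failure=60, days_to_next_outage=30))
    print(plan_work_order("FT-101", days_to_failure=20, days_to_next_outage=30))
```

The first call yields a planned repair and the second an urgent one. That distinction is the practical payoff of forecasting: work moves to a convenient time whenever the forecast allows.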

Independent research has shown that predictive maintenance typically costs only one-fifth as much as preventive maintenance. However, predictive maintenance systems need to be properly installed and maintained, often by fewer people than in the past but by those with higher skill sets.

Today’s facilities are truly integrated operations, with modern digital systems often extending from the sensors through the business network and beyond to key outside suppliers. As such, they play a key role in keeping a company competitive. However, to be effective, the systems must get proper maintenance.

Wireless comes with strings

Wireless mesh networks are starting to gain greater acceptance at plants and are changing the communications landscape for industrial networks (see “Wireless Starts to Mesh,” www.ChemicalProcessing.com/articles/2008/208.html). Such mesh networks, which generally rely on low-cost battery-powered devices, offer significant benefits such as flexibility in change-outs, faster uptime, increased mobility and lower installed costs. Wireless gives plant management access to data that historically were not available, turning data into strategic information.

Just like its wired counterpart, though, a wireless network requires a certain amount of care and maintenance to retain peak operational performance. While a mesh network should be “hands-free” because it automatically organizes and manages itself, in reality you always need to have a clear picture of what the mesh is doing. For instance, you should continually check latency (the length of time it takes data to move from one point to its intended destination). As a rule, the more nodes that connect, the more bandwidth is needed, and latency can rise as traffic competes for that bandwidth.
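
A continual latency check can be as simple as averaging recent samples per node and flagging any node that exceeds a limit. The sketch below assumes latency samples are already being collected in milliseconds; the node names, sample values and 250 ms limit are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def slow_nodes(latencies_ms: Dict[str, List[float]],
               max_mean_ms: float) -> Dict[str, float]:
    """Return {node: mean latency} for nodes whose mean exceeds max_mean_ms.

    Nodes with no samples are skipped rather than treated as healthy or slow.
    """
    flagged = {}
    for node, samples in latencies_ms.items():
        if not samples:
            continue
        average = mean(samples)
        if average > max_mean_ms:
            flagged[node] = average
    return flagged


if __name__ == "__main__":
    recent = {
        "node-1": [100.0, 120.0, 110.0],
        "node-2": [400.0, 600.0, 500.0],
        "node-3": [],
    }
    print(slow_nodes(recent, max_mean_ms=250.0))
```

Running the same check on a schedule and keeping the results turns a one-off reading into a trend, which makes it easier to spot a mesh that slows as nodes are added.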

Commercially available software can monitor the mesh in real time. At a minimum, the software should give visibility to all interconnections, provide upgrade capability for new firmware and enable control over the dynamic configuration mode and security of the mesh network. Such software allows tuning, troubleshooting and maximizing overall network performance. It continuously observes the health of the sensors and the system, including batteries, to enable predictive maintenance and hence the high reliability expected of the system.

Valuable resource

ANSI/ISA-95, “Enterprise-Control System Integration,” provides standard terminology and a consistent set of concepts and models for integrating control systems with enterprise systems, with the aim of improving communications among all parties involved. The models and terminology emphasize good practices for integrating control systems with enterprise systems during the entire lifecycle of the systems. The ISA-95 committee has developed and is continuing to work on a multi-part series of standards that defines the interfaces between enterprise activities and control activities, focusing predominantly on the interface between Levels 2, 3 and 4.

Ian Verhappen is Edmonton, Alta.-based director, industrial networks, for MTL Instruments. Frank Williams is CEO of Elpro Instruments, San Diego, Calif., a subsidiary of MTL Instruments.